Engineering Democracy Safely: The H.A.R.M.O.N.Y. Framework for Political Campaign Risk Management

Volume 8, Issue 3, July-September 2025

Dr. Senthilkumar Anantharaman

Email id: senthilkumara1851@gmail.com

Abstract

Political campaigns represent vibrant expressions of democracy, yet their large-scale

mobilisations frequently expose participants to significant safety hazards—structural,

electrical, vehicular, and crowd-related. Recent incidents, including the Karur rally disaster in

Tamil Nadu, have revealed systemic lapses in safety planning and inter-agency coordination

that demand urgent attention from both safety engineering and public policy perspectives. This

study extends safety engineering principles to the political domain by developing a conceptual

model—the H.A.R.M.O.N.Y. Framework (Hazard Analysis, Risk Mitigation, and Oversight

for Non-industrial Yields). Drawing from system safety literature, accident models such as

STAMP (Leveson, 2012), the Swiss cheese model (Reason, 1997), and contemporary risk

governance approaches, the framework provides a cyclical mechanism to anticipate, mitigate,

and learn from campaign-related accidents. Applying H.A.R.M.O.N.Y. retrospectively to the

Karur case highlights its potential to reduce preventable fatalities through structured hazard

identification, real-time monitoring, and organisational feedback loops. The study concludes

with strategic recommendations for institutionalizing safety engineering as a mandatory

dimension of democratic process management, proposing a Safety Certification System

analogous to environmental clearances in industrial sectors.

Keywords:

Safety, Hazard identification, Political campaign risk management, Risk categorization & Mitigation, Democracy safety, H.A.R.M.O.N.Y. framework, Electoral risk mitigation, Campaign security, Governance and accountability, Political process safety

Objective:

To provide a structured framework (H.A.R.M.O.N.Y.) for identifying, assessing, and mitigating risks in political campaigns, ensuring safe, transparent, and effective democratic processes.

1. Introduction

Political campaigns in India constitute some of the most logistically complex and densely

populated events in the world, seamlessly blending political fervour, extraordinary human

density, and infrastructural improvisation on scales rarely witnessed elsewhere (Kumar &

Singh, 2018). Each rally, motorcade, or roadshow temporarily transforms public spaces into

high-risk socio-technical systems, involving hastily constructed stages, improvised electrical

networks, complex traffic management scenarios, and unpredictable crowd flow dynamics.

Despite their frequency and the millions of participants they attract during election seasons,

systematic safety engineering protocols remain conspicuously absent from campaign planning

frameworks (NDMA, 2016; Rao & Mehta, 2019).

The historical record of disasters at political events, including electrocutions from campaign

vehicles, crowd stampedes, structural collapses of temporary platforms, and vehicle accidents,

has exposed severe gaps in design thinking, hazard anticipation, and preventive oversight

(Sharma, 2020). The mass casualty event at the Karur rally in 2025, where poor crowd control

and logistical failures resulted in a deadly stampede, highlights a profound flaw in systemic

safety management. This tragedy serves as a poignant and critical demonstration that the

pursuit of democratic engagement must be protected from fatal, preventable risks. This paper

argues that such accidents are not random failures or acts of fate but rather predictable

system-level breakdowns that demand a structured safety engineering response. Drawing upon

established accident causation models, particularly Reason’s (1997) “Swiss cheese model” of

organisational accidents and Leveson’s (2012) system-theoretic accident model and processes

(STAMP), we introduce the H.A.R.M.O.N.Y. Framework, a multi-layered, adaptive model

designed to embed safety engineering principles within the fabric of political campaign

management.

1.1 Research Objectives

This study pursues three interconnected objectives:

- To systematically analyse the nature and typology of safety hazards present in political campaign environments through the lens of safety engineering

- To develop a comprehensive, theoretically grounded framework (H.A.R.M.O.N.Y.) that integrates hazard identification, risk assessment, mitigation strategies, and organisational learning mechanisms

- To demonstrate the framework’s applicability through retrospective analysis of the Karur rally incident and propose policy mechanisms for institutionalising campaign safety

1.2 Significance of the Study

The significance of this research extends beyond immediate safety concerns to address

fundamental questions about the engineering of democratic spaces. As Dekker (2011) argues,

complex systems failure often results not from individual errors but from drift in organisational

practices and the gradual normalisation of deviance. Political campaigns, with their compressed

timelines, political pressures, and volunteer-dependent operations, are particularly susceptible

to such drift. By applying rigorous safety engineering methodologies to this domain, we can

transform ad-hoc political gatherings into systematically managed events that honour both

democratic participation and human safety.

2. Literature Review

2.1 Safety Engineering Foundations and Systems Thinking

Safety engineering has traditionally evolved within industrial, aerospace, and nuclear sectors,

where design redundancy, hazard analysis, and risk management are codified through

international standards such as ISO 31000:2018, IEC 61508, and AS/NZS 4360 (Hopkins,

2014). These frameworks rest on fundamental principles: that accidents are preventable

through systematic analysis, that safety must be designed into systems rather than added

afterwards, and that organisational learning from near-misses and failures is essential for

continuous improvement (Leveson, 2012).

The evolution of safety thinking has progressed through several paradigmatic shifts. Early

safety engineering focused on component reliability and technical failures—what Hollnagel

(2014) terms “Safety-I,” characterised by reactive responses to things that go wrong.

Contemporary approaches, particularly resilience engineering, embrace “Safety-II” thinking,

which emphasises understanding how things go right and building adaptive capacity into

systems (Hollnagel, Woods, & Leveson, 2006).

Leveson’s (2012) STAMP (System-Theoretic Accident Model and Processes) represents a

significant advancement in safety thinking by moving beyond linear chain-of-events models to

recognise accidents as emergent properties of complex systems. STAMP emphasises control

structures, feedback loops, and the enforcement of safety constraints across organisational

hierarchies—concepts directly applicable to the multi-agency coordination required in

campaign management.

However, political gatherings are rarely conceptualised as engineered systems requiring formal

safety architecture. This gap in thinking has led to repeated incidents and a persistent inability

to learn from failures across different political contexts (Challenger, Clegg, & Robinson, 2009).

2.2 Accidents in Political and Mass Gathering Contexts

The academic literature on mass gathering safety has primarily focused on religious

pilgrimages, sporting events, and music festivals rather than political campaigns (Memish et

al., 2012; Still, 2014). Studies of events such as the Hajj pilgrimage, Kumbh Mela, and major

concerts demonstrate that accidents often result from organisational complexity, inadequate

crowd modelling, and weak control structures rather than individual negligence (Helbing,

Johansson, & Al-Abideen, 2007).

Political campaigns share similar dynamics with these mass gatherings but differ in critical

ways that exacerbate risk profiles. Unlike planned festivals with dedicated infrastructure,

political rallies involve voluntary participation, ad-hoc infrastructure, intense political pressure,

compressed planning timelines, and frequently, a culture of risk tolerance driven by

competitive electoral dynamics (Rao & Mehta, 2019). The temporary nature of campaign

structures, combined with the symbolic importance of crowd size in political messaging,

creates perverse incentives that prioritise mobilisation over safety.

Challenger et al. (2009) identified key factors in crowd-related incidents, including inadequate

ingress and egress planning, information failures, and breakdown in crowd-authority

communication. Their work emphasised that crowd disasters typically result from systemic

failures rather than crowd irrationality, a finding that aligns with modern safety engineering’s

emphasis on system design over individual blame.

2.3 Organizational Safety Culture and High-Reliability Organizations

The concept of safety culture, originally developed in response to major industrial disasters

like Chernobyl and the space shuttle Challenger accident, emphasises that organisational

attitudes, values, and practices fundamentally shape safety outcomes (Reason, 1997; Vaughan,

1996). High-reliability organisations (HROs)—such as aircraft carriers and nuclear power

plants—maintain extraordinary safety records despite operating in high-risk environments by

cultivating specific organisational characteristics: preoccupation with failure, reluctance to

simplify interpretations, sensitivity to operations, commitment to resilience, and deference to

expertise (Weick & Sutcliffe, 2015).

Political campaign organisations rarely exhibit HRO characteristics. Campaign teams typically

prioritise political messaging, logistics, and mobilisation, with safety considerations relegated

to minimal police coordination. The absence of dedicated safety professionals in campaign

planning teams, combined with the temporary nature of campaign organisations, prevents the

development of institutional safety memory (Sharma, 2020).

2.4 Risk Governance and Multi-Agency Coordination

Contemporary risk governance frameworks recognise that managing complex risks requires

coordination across multiple organisational boundaries, stakeholder engagement, and

integration of diverse knowledge systems (Renn, 2008). The International Risk Governance

Council (IRGC) framework emphasises the need for systematic risk assessment, evaluation,

management, and communication—all elements conspicuously absent in current campaign

management practices.

Multi-agency coordination presents particular challenges in campaign contexts where authority

is distributed among political organisations, police departments, local governments, electricity

boards, traffic management agencies, and emergency medical services. Without clear command

structures and communication protocols, these agencies often operate in silos, creating

dangerous gaps in safety coverage (NDMA, 2016).

2.5 Gap in Literature: Engineering-Based Frameworks for Campaign Safety

While the NDMA (2016) provides valuable crowd management guidelines focused primarily

on law enforcement perspectives, there remains a critical gap in engineering-based frameworks

specifically applicable to campaign logistics. Existing industrial safety models assume formal

work systems with clear authority, documentation, and boundaries—characteristics often

absent in political campaigns. What is needed is a framework that integrates human factors

engineering, organisational safety culture, and systemic learning mechanisms while remaining

flexible enough to adapt to the fluid, politically charged environment of campaigns.

This study addresses this gap by developing the H.A.R.M.O.N.Y. Framework, which

synthesises insights from multiple safety disciplines into a unified model suitable for transient

public systems.

3. Methodological Approach

This paper adopts a conceptual systems engineering approach that combines multiple analytical

methodologies to create a comprehensive safety framework. The methodology integrates

theoretical development with practical application through retrospective case analysis.

3.1 Research Design

The research follows a qualitative, model-building approach grounded in established safety

engineering methodologies:

Failure Mode and Effects Analysis (FMEA): Used for systematically identifying and

classifying potential hazards in campaign environments, assessing their severity, likelihood,

and detectability (Stamatis, 2003). This method provides structured templates for hazard

documentation and prioritisation.

System-Theoretic Process Analysis (STPA): Applied for modelling control structures, feedback

mechanisms, and safety constraint enforcement across organisational boundaries (Leveson,

2012). STPA’s strength lies in identifying hazardous control actions and inadequate feedback

loops before they manifest as accidents.

Root Cause Analysis (RCA) and Post-Event Reviews (PER): Employed for learning integration

and capturing institutional knowledge from incidents. RCA techniques, including the “5 Whys”

and fishbone diagrams, help identify systemic causes beyond proximate triggers (Rooney &

Heuvel, 2004).

Comparative Analysis: Drawing insights from analogous high-risk systems, including aviation

ground operations, concert venue management, and construction site safety to identify

transferable safety principles.

3.2 Validation Approach

The research follows a qualitative validation approach, applying the developed framework

retrospectively to the Karur rally incident as an illustrative case. This retrospective application

serves multiple purposes: demonstrating the framework’s analytical utility, identifying specific

failure points that the framework would address, and illustrating how systematic application

could have prevented the tragedy.

The objective is not empirical measurement of incident rates or statistical validation but rather

model-based reasoning for policy and design reform. This approach aligns with conceptual

modelling traditions in safety engineering, where frameworks are first developed theoretically,

then refined through practical application and expert validation (Leveson, 2012).

3.3 Methodological Contribution

The present study contributes methodologically to safety engineering in three distinct ways—

conceptual extension, systemic synthesis, and domain adaptation—each expanding the

boundaries of traditional safety research.

Conceptual Extension of Safety Engineering Principles: Traditional safety methodologies such

as HAZOP (Hazard and Operability Study), FMEA, and STPA are designed for industrial

systems where operations, roles, and environments are well defined, stable, and repeatable.

Political campaigns, by contrast, represent temporary, open, and socio-technically unstable

systems characterised by fluid authority structures, volunteer workforces, and compressed

timelines. The H.A.R.M.O.N.Y. Framework extends these established methods into such

non-industrial contexts by creating a flexible, modular structure that adapts the rigour of

engineering analysis to dynamic, people-centred environments. This conceptual extension

transforms safety engineering from a primarily technical field into a socio-technical systems

discipline capable of governing fluid human systems.

Systemic Synthesis Across Multi-Disciplinary Inputs: The framework synthesises insights

from systems theory (Leveson, 2012), organisational accident modelling (Reason, 1997),

resilience engineering (Hollnagel, 2014), and risk governance (Renn, 2008) into a unified

model suitable for transient public systems. It integrates both top-down hazard control

(engineering standards and regulatory oversight) and bottom-up behavioral adaptation

(learning feedback and organizational memory), producing a closed-loop control architecture

that balances prescriptive and adaptive safety mechanisms. This synthesis ensures that each

H.A.R.M.O.N.Y. component—Hazard identification, Assessment, Risk mitigation,

Monitoring, Oversight, Normalization, and Yield—forms a recursive learning cycle rather than

a linear compliance checklist.

Domain Adaptation to Non-industrial, High-Density Systems: Most existing safety

methodologies assume formal work systems with clear hierarchies, documented procedures,

and defined boundaries. Political rallies and campaign events lack these attributes, operating

instead through informal networks, political urgency, and volunteer coordination. The

methodological innovation here lies in adapting engineering-level controls to socio-political

systems through proxy parameters such as crowd density metrics, stage load calculations,

vehicle clearance assessments, and inter-agency communication reliability measures. Thus, the

framework provides a transferable methodological bridge from industrial process safety to

democratic process safety—a novel conceptual space not yet codified in safety science

literature.

Overall, the H.A.R.M.O.N.Y. model’s methodological novelty lies in its ability to transform

unregulated, ephemeral events into auditable safety systems through an engineering mindset.

By fusing analytical rigor with democratic inclusivity, it reframes political campaign

management as a legitimate object of safety engineering inquiry and intervention.

4. The H.A.R.M.O.N.Y. Framework: A Safety Engineering Architecture

4.1 Conceptual Definition and Philosophy

H.A.R.M.O.N.Y. extends the system safety paradigm to non-industrial, high-density political

environments. The framework’s philosophy is grounded in two complementary principles:

safety by design and learning by reflection. The name itself embodies the framework’s

aspirational goal: achieving harmony among human enthusiasm, technical infrastructure, and

environmental constraints within democratic spaces.

The framework recognises that political campaigns represent complex adaptive systems where

safety emerges from the interaction of multiple components rather than from any single control

mechanism.

Following STAMP’s system-theoretic approach (Leveson, 2012), H.A.R.M.O.N.Y. emphasises:

- Control structures that enforce safety constraints across organisational boundaries

- Feedback loops that enable real-time adaptation and post-event learning

- Hierarchical coordination that balances local autonomy with systemic oversight

- Constraint enforcement that maintains safety boundaries without stifling democratic

expression

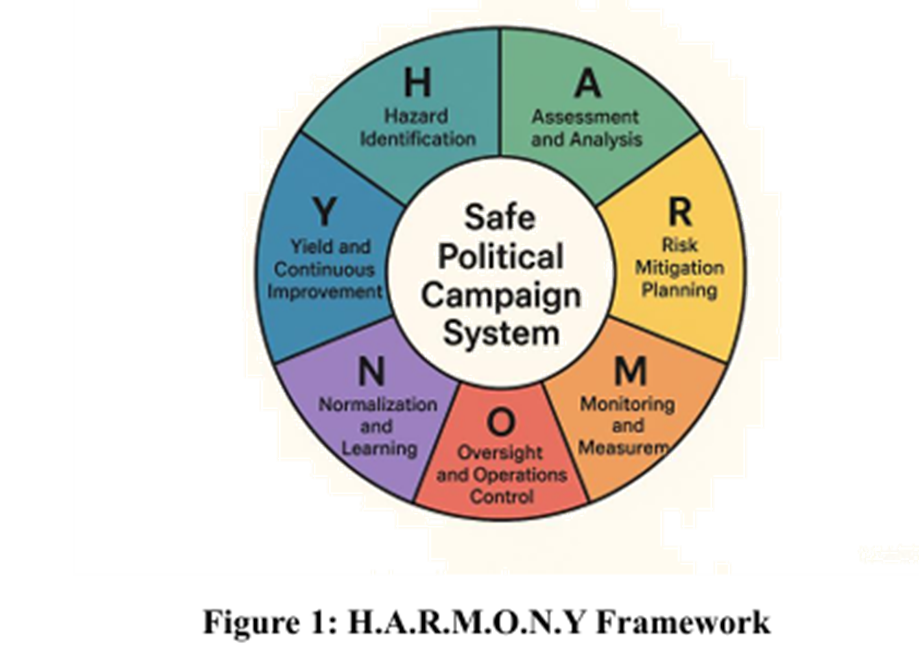

The Framework is shown in Figure 1.

4.2 Framework Components: Detailed Exposition

H – Hazard Identification

Definition: Systematic recognition of structural, electrical, meteorological, vehicular, and

crowd-related hazards through comprehensive site surveys and route assessments.

Theoretical Foundation: Drawing from FMEA methodologies, this phase involves proactive

identification of failure modes before they manifest. Hazard identification must be

comprehensive, covering all phases of the campaign event lifecycle from planning through

post-event dispersal (Stamatis, 2003).

Key Activities in Political Campaigns:

- Conduct pre-event electrical mapping using GIS technology to identify overhead power lines, transformers, and substations along procession routes

- Perform structural audits of temporary stages, platforms, and scaffolding using load calculation standards

- Assess meteorological forecasts for weather-related hazards including lightning, high winds, and extreme temperatures

- Evaluate crowd flow patterns using site topology analysis and historical attendance data

- Identify vehicular hazards including route obstacles, traffic conflict points, and emergency access routes

Implementation Requirements:

- Deploy multi-disciplinary hazard identification teams including electrical engineers, structural engineers, and crowd safety specialists

- Utilize technology tools including drone surveys, GIS mapping platforms, and 3D modelling software

- Establish standardized hazard checklists specific to political campaign contexts

- Create hazard registers documenting identified risks with photographic evidence and GPS coordinates
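As a rough illustration of what one hazard register entry might hold, the sketch below models it as a small record type. The field names, the `group_by_type` helper, and the identifier format are assumptions made for this example; the hazard categories follow the definition given at the start of this component.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Dict, List


class HazardType(Enum):
    # Categories named in the Hazard Identification definition.
    STRUCTURAL = "structural"
    ELECTRICAL = "electrical"
    METEOROLOGICAL = "meteorological"
    VEHICULAR = "vehicular"
    CROWD = "crowd"


@dataclass
class HazardEntry:
    """One row of a hypothetical campaign hazard register."""
    hazard_id: str
    hazard_type: HazardType
    description: str
    latitude: float   # GPS coordinate in decimal degrees
    longitude: float  # GPS coordinate in decimal degrees
    photo_paths: List[str] = field(default_factory=list)

    def __post_init__(self) -> None:
        # Reject coordinates that cannot be valid GPS positions.
        if not -90.0 <= self.latitude <= 90.0:
            raise ValueError("latitude must be within [-90, 90]")
        if not -180.0 <= self.longitude <= 180.0:
            raise ValueError("longitude must be within [-180, 180]")


def group_by_type(entries: List[HazardEntry]) -> Dict[HazardType, List[HazardEntry]]:
    """Group register entries by hazard category for review by specialists."""
    grouped: Dict[HazardType, List[HazardEntry]] = {}
    for entry in entries:
        grouped.setdefault(entry.hazard_type, []).append(entry)
    return grouped
```

Grouping by category is one simple way to route entries to the relevant specialist (for example, electrical entries to the electrical engineer on the team); a real register would likely also record the surveyor, date, and review status.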

A – Assessment and Analysis

Definition: Quantitative and qualitative evaluation of identified hazards to determine severity,

probability, and overall risk level.

Theoretical Foundation: Risk assessment methodologies from ISO 31000:2018 provide

structured approaches to evaluating consequences and likelihoods. The assessment phase

transforms identified hazards into prioritized risks requiring intervention (ISO, 2018).

Key Activities:

- Apply FMEA scoring systems assigning severity (S), occurrence (O), and detection (D) ratings to each identified hazard

- Calculate Risk Priority Numbers (RPN = S × O × D) to prioritize mitigation efforts

- Conduct STPA analysis to identify unsafe control actions and inadequate feedback mechanisms

- Document findings in a comprehensive campaign safety register with visual risk matrices

- Hold stakeholder workshops to validate risk assessments and incorporate local knowledge

Risk Categorisation:

- Critical risks: Potential for multiple fatalities or mass casualties (e.g., structural collapse, major electrical hazards)

- Serious risks: Potential for significant injuries or limited fatalities (e.g., crowd crushes, vehicle accidents)

- Moderate risks: Potential for minor injuries or property damage (e.g., minor electrical shocks, trip hazards)

- Low risks: Minimal injury potential but requiring documentation (e.g., audio equipment failure, minor weather impacts)
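The RPN calculation in the key activities above can be sketched in a few lines. This is a minimal illustration, assuming the conventional 1 to 10 FMEA rating scale; the hazard names and ratings in `SAMPLE_RATINGS` are invented for the example and are not assessments of any real event.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class FmeaRating:
    """Severity, occurrence, and detection ratings for one hazard."""
    hazard: str
    severity: int
    occurrence: int
    detection: int

    def __post_init__(self) -> None:
        # Assumed scale: each rating is an integer from 1 to 10.
        for name in ("severity", "occurrence", "detection"):
            value = getattr(self, name)
            if not 1 <= value <= 10:
                raise ValueError(f"{name} must be between 1 and 10, got {value}")

    @property
    def rpn(self) -> int:
        # Risk Priority Number: RPN = S x O x D
        return self.severity * self.occurrence * self.detection


def prioritise(ratings: List[FmeaRating]) -> List[FmeaRating]:
    """Return ratings ordered from highest to lowest RPN."""
    return sorted(ratings, key=lambda rating: rating.rpn, reverse=True)


# Illustrative values only.
SAMPLE_RATINGS = [
    FmeaRating("Trip hazard from loose cabling", severity=3, occurrence=6, detection=2),
    FmeaRating("Overhead line contact", severity=10, occurrence=4, detection=6),
    FmeaRating("Stage overload", severity=9, occurrence=3, detection=4),
]
```

With these sample values, `prioritise(SAMPLE_RATINGS)` places the overhead line hazard first (RPN 240), then stage overload (108), then the trip hazard (36). RPN is only a ranking aid: a hazard with maximum severity may still warrant treatment as a critical risk even when its RPN is modest.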

R – Risk Mitigation Planning

Definition: Development and implementation of engineered, administrative, and behavioural

controls to eliminate or minimize identified hazards.

Theoretical Foundation: The hierarchy of controls—elimination, substitution, engineering

controls, administrative controls, and personal protective equipment—provides a systematic

approach to risk reduction (NIOSH, 2015).

Key Activities:

- Apply insulation standards to all metallic campaign materials including flagpoles, sound equipment, and decorative structures

- Conduct structural stability testing using engineering calculations for load-bearing capacity

- Implement crowd density controls through physical barriers, multiple entry/exit points, and capacity limits

- Establish clear height restrictions for campaign vehicles with visual markers and measuring devices

- Develop emergency response protocols including evacuation procedures and medical support positioning

- Create administrative controls including safety briefings, permission systems, and safety officer appointments

Mitigation Strategies by Hazard Type:

- Electrical hazards: Route modification, insulated materials, clearance enforcement, power line burial or insulation

- Structural hazards: Professional engineering design, load testing, anchoring systems, redundant support structures

- Crowd hazards: Flow modelling, capacity limits, real-time density monitoring, trained stewards

- Vehicular hazards: Speed limits, professional drivers, vehicle inspection, designated pedestrian zones
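To show how the hierarchy of controls might guide the ordering of mitigation options, the sketch below ranks candidate controls for an electrical hazard from most to least effective. The classification of each measure into a hierarchy level is an assumption made for illustration; a safety professional may classify the same measure differently.

```python
from enum import IntEnum
from typing import List, Tuple


class ControlLevel(IntEnum):
    """Hierarchy of controls; a lower number means a more effective control."""
    ELIMINATION = 1
    SUBSTITUTION = 2
    ENGINEERING = 3
    ADMINISTRATIVE = 4
    PPE = 5


Control = Tuple[str, ControlLevel]

# Illustrative classification only (assumed, not taken from the framework).
ELECTRICAL_CONTROLS: List[Control] = [
    ("Safety briefing on overhead lines", ControlLevel.ADMINISTRATIVE),
    ("Vehicle height markers and clearance gauge", ControlLevel.ENGINEERING),
    ("Route modification away from power lines", ControlLevel.ELIMINATION),
    ("Insulated flagpoles instead of bare metal", ControlLevel.SUBSTITUTION),
]


def most_effective_first(controls: List[Control]) -> List[Control]:
    """Order candidate controls so higher-order controls are considered first."""
    return sorted(controls, key=lambda control: control[1])
```

Sorting this way keeps planners from defaulting to briefings and signage when a route change would remove the exposure altogether.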

M – Monitoring and Measurement

Definition: Real-time observation and documentation of risk indicators, safety compliance, and

emerging hazards throughout the event lifecycle.

Theoretical Foundation: Dynamic risk management requires continuous monitoring with

feedback mechanisms enabling rapid response to changing conditions (Hopkins, 2014).

Key Activities:

- Deploy IoT-based sensors for structural stress monitoring on temporary platforms

- Utilize drone surveillance for crowd density assessment and real-time flow pattern analysis

- Implement crowd analytics using computer vision technology to detect dangerous density levels

- Install weather monitoring stations providing real-time alerts for meteorological hazards

- Position safety observers at critical control points with direct communication to command centres

- Maintain continuous electrical monitoring of isolation distances and grounding systems

Technology Integration:

- Sensor networks transmitting data to centralized dashboards

- Mobile applications enabling distributed safety reporting by stewards

- Real-time communication systems linking field observers to command centres

- Automated alert systems triggering interventions when parameters exceed safety

thresholds
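The automated alert idea in the list above can be illustrated with a simple threshold check. This is a sketch under stated assumptions: the parameter names and the limit values in `THRESHOLDS` are placeholders chosen for the example, and real limits would have to come from site-specific engineering assessment.

```python
from typing import Dict, List, NamedTuple


class Alert(NamedTuple):
    parameter: str
    value: float
    limit: float


# Placeholder limits for illustration only, not recommended safety values.
THRESHOLDS: Dict[str, float] = {
    "crowd_density_per_m2": 4.0,
    "wind_speed_kmh": 40.0,
    "stage_load_fraction": 0.8,
}


def check_readings(readings: Dict[str, float],
                   thresholds: Dict[str, float] = THRESHOLDS) -> List[Alert]:
    """Return an alert for every monitored reading that exceeds its limit."""
    alerts: List[Alert] = []
    for parameter, value in readings.items():
        limit = thresholds.get(parameter)
        # Readings without a configured limit are ignored rather than guessed at.
        if limit is not None and value > limit:
            alerts.append(Alert(parameter, value, limit))
    return alerts
```

For example, `check_readings({"crowd_density_per_m2": 5.2, "wind_speed_kmh": 12.0})` yields a single alert for crowd density. In practice each alert would be routed to the command centre, where a designated officer decides on the intervention.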

O – Oversight and Operations Control

Definition: Coordination of multi-agency safety teams through clear command protocols and

communication structures.

Theoretical Foundation: Incident Command System (ICS) principles provide proven

frameworks for managing complex multi-agency operations (FEMA, 2008). Effective

oversight requires clear authority, defined responsibilities, and structured communication.

Key Activities:

- Establish Safety Command Cells integrating engineers, police, medical services, and event organisers

- Implement Unified Command structures with clear decision-making authority

- Develop Standard Operating Procedures (SOPs) for various hazard scenarios

- Conduct pre-event coordination meetings with all stakeholder agencies

- Maintain Operations Centres with real-time communication to field teams

- Assign designated safety officers with authority to halt operations if critical safety

thresholds are breached

Organisational Structure:

- Safety Commander: Senior official with overall authority for safety decisions

- Technical Advisory Team: Engineers providing hazard assessment and mitigation guidance

- Operations Coordinators: Managing implementation of safety protocols

- Communications Officers: Ensuring information flow across organisational boundaries

- Medical Coordinators: Positioning emergency medical resources

- Crowd Management Teams: Implementing flow control and density management

N – Normalisation and Learning

Definition: Systematic capture, analysis, and institutionalisation of lessons learned from both

incidents and successful operations.

Theoretical Foundation: Organisational learning theory emphasises that sustained safety

improvement requires converting experience into institutional knowledge (Argyris & Schön,

1978). Learning must operate at both single-loop (correcting errors) and double-loop

(questioning assumptions) levels.

Key Activities:

- Conduct mandatory Post-Event Reviews (PER) within 48 hours of all campaign events

- Utilise structured incident investigation methodologies, including Root Cause Analysis

- Document near-misses and good practices alongside actual incidents

- Share safety reports with election authorities and political parties

- Maintain centralized safety databases enabling cross-campaign learning

- Conduct periodic safety audits reviewing compliance with established protocols

Learning Mechanisms:

- Incident databases: Searchable repositories of safety events with causal analysis

- Best practice libraries: Documentation of successful safety innovations

- Safety bulletins: Regular dissemination of safety lessons to campaign organisations

- Training programs: Integration of lessons learned into safety officer certification

- Academic partnerships: Research collaboration improving theoretical understanding

Y – Yield and Continuous Improvement

Definition: Systematic evaluation of safety performance outcomes to drive iterative refinement

of safety systems and protocols.

Theoretical Foundation: Plan-Do-Check-Act (PDCA) cycles, originating from quality

management but widely applied in safety management, provide structured approaches to

continuous improvement (Deming, 2000).

Key Activities:

- Apply PDCA cycles to campaign safety management systems

- Develop Key Performance Indicators (KPIs) measuring safety outcomes including incident rates, near-miss frequencies, and compliance metrics

- Conduct annual reviews of the H.A.R.M.O.N.Y. framework effectiveness

- Benchmark safety performance across regions and campaign organizations

- Integrate emerging technologies and methodologies into the framework

- Establish feedback mechanisms enabling frontline safety personnel to propose

improvements

Performance Metrics:

- Leading indicators: Safety audits completed, training hours delivered, hazards identified and mitigated

- Lagging indicators: Incidents per event, injury rates, property damage costs

- Process indicators: Time to incident response, stakeholder coordination effectiveness

- Cultural indicators: Safety reporting rates, near-miss disclosure frequency
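A few of these indicators can be computed directly from per-event records. The sketch below is illustrative: the `EventRecord` fields and the three helper functions are assumptions chosen to show one leading, one lagging, and one cultural indicator, not a prescribed metric set.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class EventRecord:
    """Hypothetical safety summary for a single campaign event."""
    incidents: int
    near_misses_reported: int
    hazards_identified: int
    hazards_mitigated: int


def incidents_per_event(events: List[EventRecord]) -> float:
    """Lagging indicator: average number of incidents per event."""
    if not events:
        return 0.0
    return sum(e.incidents for e in events) / len(events)


def mitigation_rate(events: List[EventRecord]) -> float:
    """Leading indicator: share of identified hazards that were mitigated."""
    identified = sum(e.hazards_identified for e in events)
    if identified == 0:
        return 0.0
    return sum(e.hazards_mitigated for e in events) / identified


def near_miss_reports_per_event(events: List[EventRecord]) -> float:
    """Cultural indicator: average near-miss reports filed per event."""
    if not events:
        return 0.0
    return sum(e.near_misses_reported for e in events) / len(events)
```

A rising near-miss reporting rate alongside a falling incident rate would usually be read as a healthy sign, since it suggests staff are disclosing problems before they cause harm.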

4.3 The H.A.R.M.O.N.Y. Cycle: Systemic Integration

The H.A.R.M.O.N.Y. framework operates as a closed-loop control system, integrating hazard

anticipation, active control, and learning feedback in a continuous cycle:

Pre-Event Phase: Hazard Identification → Risk Assessment → Mitigation Planning

Event Phase: Monitoring and Measurement → Oversight and Operations Control

Post-Event Phase: Normalisation and Learning → Yield and Continuous Improvement →

(feeds back to next event’s Hazard Identification)

This cyclical structure ensures that each campaign event both benefits from accumulated

institutional knowledge and contributes new insights to the evolving safety knowledge base.

The framework explicitly rejects linear, checklist-based approaches in favour of adaptive

learning systems that recognise the complexity and uniqueness of each campaign context.
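The closed-loop character of the cycle can be sketched as an ordered sequence of stages that wraps around, with lessons from one event extending the hazard checklist for the next. The stage names follow the phase listing above; `next_stage` and `carry_forward` are hypothetical helpers written for this illustration.

```python
from typing import List, Tuple

# (phase, stage) pairs in the order given by the H.A.R.M.O.N.Y. cycle.
STAGES: List[Tuple[str, str]] = [
    ("pre-event", "Hazard Identification"),
    ("pre-event", "Risk Assessment"),
    ("pre-event", "Mitigation Planning"),
    ("event", "Monitoring and Measurement"),
    ("event", "Oversight and Operations Control"),
    ("post-event", "Normalisation and Learning"),
    ("post-event", "Yield and Continuous Improvement"),
]


def next_stage(current: str) -> str:
    """Return the stage that follows `current`, wrapping back to the start."""
    names = [name for _, name in STAGES]
    index = names.index(current)  # raises ValueError for an unknown stage
    return names[(index + 1) % len(names)]


def carry_forward(checklist: List[str], lessons: List[str]) -> List[str]:
    """Build the next event's hazard checklist from this event's lessons."""
    updated = list(checklist)
    for lesson in lessons:
        if lesson not in updated:
            updated.append(lesson)
    return updated
```

The wrap-around in `next_stage` is what distinguishes the cycle from a one-pass checklist: the final stage hands off to Hazard Identification for the following event.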

5. Case Application: The Karur Rally under H.A.R.M.O.N.Y. Analysis

To demonstrate the framework’s diagnostic and preventive utility, we apply H.A.R.M.O.N.Y.

retrospectively to the Karur rally incident, identifying specific failures at each phase and

proposing concrete corrective measures.

On September 27, 2025, a rally organised by a new political party in Karur, Tamil Nadu, turned

into a devastating stampede that claimed the lives of 41 women and injured scores of others.

What began as a promise of welfare and empowerment ended in tragedy, as a surge of

thousands, many from economically weaker sections, rushed toward the distribution counters,

only to be trapped in a space that had no safe exit.

The tragedy was not a random occurrence; it was the predictable outcome of systemic neglect.

Preliminary investigations revealed that the venue lacked capacity assessment, barricade

design approval, and real-time crowd monitoring. Many women had travelled long distances,

waiting for hours under oppressive heat. As confusion spread and the temporary roof structures

began to collapse, panic cascaded through the crowd.

Several victims were mothers and daily wage earners, their only intent being to collect welfare

tokens meant to support their families. In their final moments, they became silent witnesses to

the failure of safety governance, a failure that blurred the line between administrative oversight

and moral responsibility.

5.1 The Human Context of Systemic Failure

The Karur 2025 incident reminds us that safety engineering, at its core, is not only about

calculating risks but about protecting lives. Each victim represents a point of data that should

have existed in a risk model, a heatmap, or an evacuation plan. Yet, their absence in planning

transformed a civic gathering into a site of collective trauma.

In this sense, engineering must rediscover its ethical spine: designing systems that acknowledge

vulnerability and empathy as functional variables, not afterthoughts.

The H.A.R.M.O.N.Y. framework responds to this moral imperative by embedding human-centered principles into every stage of risk management, from hazard detection to continuous learning.

5.2 Application of the H.A.R.M.O.N.Y. Framework

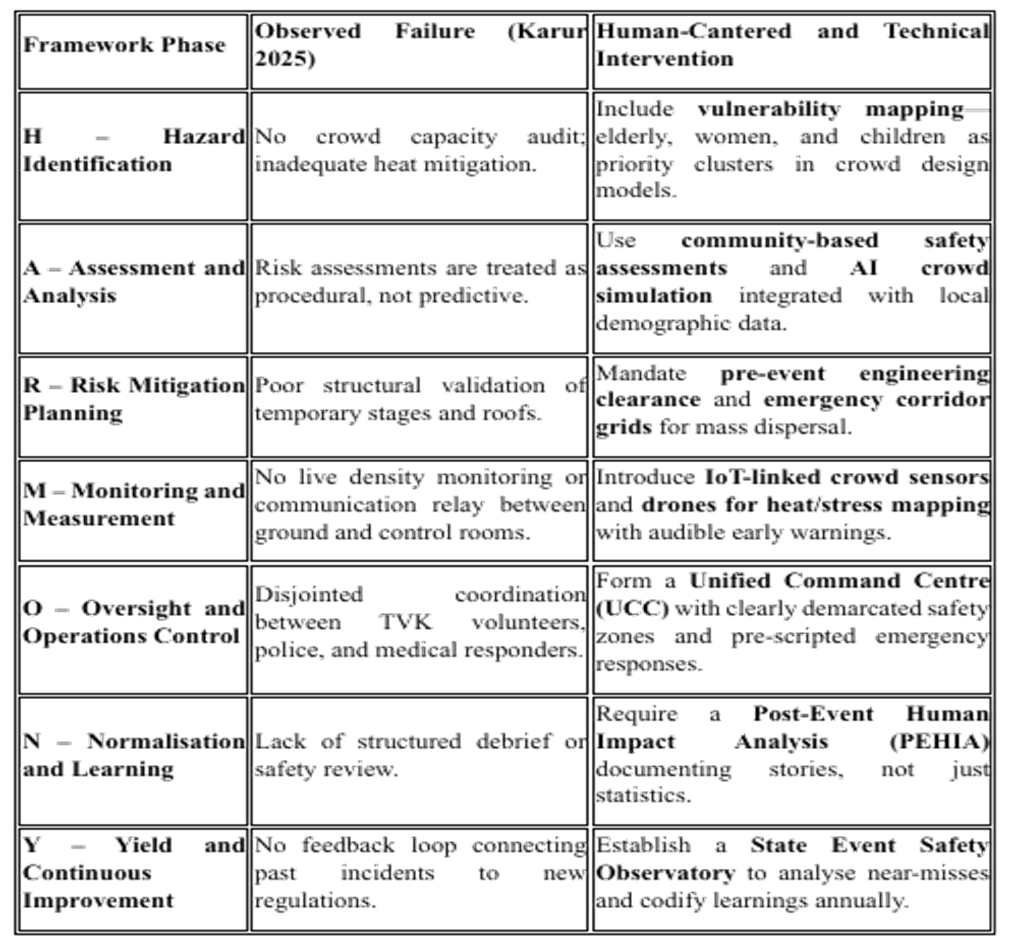

This tragedy thus becomes a mirror for our times: while political campaigns symbolise democracy in motion, they must also reflect democracy in protection. The H.A.R.M.O.N.Y. framework urges policymakers and engineers alike to move from a reactive culture of condolence to a proactive culture of care, where human dignity anchors every safety plan. (Refer to Figure 2)

6. Integration with Global Standards and Frameworks

The H.A.R.M.O.N.Y. framework does not exist in isolation but rather builds upon and

integrates with established international safety and risk management standards. This integration

ensures compatibility with global best practices while maintaining flexibility for local

adaptation.

6.1 ISO 31000:2018 Risk Management

The framework’s risk assessment and mitigation components align directly with ISO 31000’s

principles, emphasising integrated, structured, comprehensive, and customised approaches to

risk management (ISO, 2018). H.A.R.M.O.N.Y. operationalises ISO 31000’s high-level

principles for the specific context of political campaigns.
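One way such operationalisation could look is a likelihood-severity risk register. The sketch below is an assumption made for illustration: ISO 31000 does not prescribe a scoring scale, and the five-point scales, band boundaries, and hazard ratings here are hypothetical rather than drawn from the standard or from any investigation.

```python
from enum import IntEnum


class Likelihood(IntEnum):
    RARE = 1
    UNLIKELY = 2
    POSSIBLE = 3
    LIKELY = 4
    ALMOST_CERTAIN = 5


class Severity(IntEnum):
    MINOR = 1
    MODERATE = 2
    SERIOUS = 3
    MAJOR = 4
    CATASTROPHIC = 5


def risk_score(likelihood: Likelihood, severity: Severity) -> int:
    """Score a hazard as likelihood times severity (range 1 to 25)."""
    return int(likelihood) * int(severity)


def risk_level(score: int) -> str:
    # Band boundaries are assumptions for this sketch.
    if score >= 15:
        return "high"
    if score >= 6:
        return "medium"
    return "low"


# Hypothetical register entries; the ratings are not findings from any inquiry.
register = [
    ("Generator cable trip hazard", Likelihood.UNLIKELY, Severity.MINOR),
    ("Temporary roof collapse", Likelihood.POSSIBLE, Severity.CATASTROPHIC),
    ("Heat exhaustion in queue", Likelihood.LIKELY, Severity.MODERATE),
]

# Rank hazards so that mitigation effort goes to the highest scores first.
ranked = sorted(register, key=lambda e: risk_score(e[1], e[2]), reverse=True)

for hazard, likelihood, severity in ranked:
    score = risk_score(likelihood, severity)
    print(f"{hazard}: score {score}, level {risk_level(score)}")
```

A multiplicative score is only one convention; a campaign safety authority might equally adopt a lookup matrix or treat any catastrophic-severity hazard as high regardless of likelihood.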

6.2 NDMA Guidelines on Crowd Management (2016)

India’s National Disaster Management Authority provides foundational crowd management

guidelines focused primarily on law enforcement perspectives. H.A.R.M.O.N.Y. extends these

guidelines by integrating engineering analysis, technical hazard assessment, and organisational

learning mechanisms that complement law enforcement functions.

6.3 WHO Mass Gathering Health Risk Assessment (2021)

The World Health Organization’s framework for assessing health risks at mass gatherings

provides valuable methodologies for medical planning and public health surveillance.

H.A.R.M.O.N.Y. incorporates these health dimensions while expanding the scope to

encompass electrical, structural, and systemic organisational risks.

6.4 Leveson’s STAMP (2012)

The framework’s emphasis on control structures, feedback loops, and system-level thinking

directly derives from STAMP’s system-theoretic approach. H.A.R.M.O.N.Y. applies STAMP’s

principles to the specific domain of political campaigns, recognising accidents as emergent

properties of inadequate constraint enforcement across organisational boundaries.
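The idea of a control structure with feedback loops can be made concrete with a small model. The following is a simplified sketch and not a full STPA analysis: it records who controls whom and flags control relationships that have no feedback channel. The actors, control actions, and feedback channels are hypothetical examples, not a description of any real event.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class ControlLink:
    controller: str
    controlled: str
    control_action: str
    feedback: Optional[str]  # None means nothing reports back to the controller


def open_loops(links):
    """Return links where a control action exists but no feedback channel does."""
    return [link for link in links if link.feedback is None]


# Hypothetical control structure for a campaign event.
links = [
    ControlLink("District administration", "Event organiser",
                "permit conditions", None),
    ControlLink("Event organiser", "Crowd marshals",
                "entry and exit instructions", "radio status reports"),
    ControlLink("Safety Command Cell", "Electrical contractor",
                "power isolation order", None),
]

for link in open_loops(links):
    print(f"No feedback: {link.controller} -> {link.controlled} "
          f"({link.control_action})")
```

Each flagged link marks a place where a controller issues constraints without any means of learning whether they are being met, which is the kind of gap a system-theoretic analysis is meant to surface.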

6.5 IEC 61508 Functional Safety

While developed for electrical, electronic, and programmable electronic safety-related systems, IEC 61508’s approach to safety integrity

levels and systematic hazard analysis provides relevant methodologies for assessing electrical

risks in campaign environments. H.A.R.M.O.N.Y. adapts these technical standards to

temporary political infrastructure, establishing equivalent safety requirements appropriate to

the campaign context.

By bridging technical safety standards, risk governance frameworks, and mass gathering

guidelines, H.A.R.M.O.N.Y. translates abstract safety principles into practical democratic

infrastructure management protocols. This integration ensures the framework benefits from

decades of safety engineering evolution while remaining accessible to political organisations

and election authorities.

7. Limitations and Future Research Directions

7.1 Study Limitations

This conceptual study has several limitations that constrain its claims and call for

caution in interpretation:

Retrospective analysis: Applying the framework retrospectively to past incidents differs from

prospective implementation, where real-world political pressures, resource constraints, and

organisational dynamics introduce complexities not fully captured in conceptual modelling.

Generalisability questions: The framework was developed within Indian political contexts; its

applicability to different political systems, campaign cultures, and regulatory environments

requires careful adaptation rather than direct transfer.

Implementation feasibility: While the study proposes implementation mechanisms, actual

political will, resource availability, and institutional capacity for implementation remain

uncertain and context-dependent.

Absence of quantitative validation: The study develops the framework qualitatively,

without statistical validation of effectiveness metrics, cost-benefit quantification, or controlled

intervention studies demonstrating incident reduction.

Single case application: While the Karur incident provides illustrative value, broader

empirical validation across multiple incidents, regions, and political contexts would strengthen

framework robustness.

8. Future Research Directions

These limitations suggest productive research directions for advancing campaign safety

knowledge:

Empirical Validation Studies

Prospective intervention research: Implementing H.A.R.M.O.N.Y. in selected campaign

contexts with rigorous evaluation comparing safety outcomes, incident rates, and near-miss

frequencies against control groups using conventional practices.

Multi-case comparative analysis: Systematically analysing diverse campaign incidents

across regions, political parties, and event types to identify patterns, validate framework

components, and refine hazard taxonomies.

Longitudinal studies: Tracking safety performance across multiple election cycles to assess

learning effectiveness, organisational culture evolution, and sustainability of safety

improvements.

Technological Development Research

Sensor system development: Engineering research developing purpose-built sensor

technologies optimised for temporary campaign infrastructure, balancing cost, reliability, and

deployment simplicity.

Crowd modelling advancement: Computational research improving crowd flow prediction,

density estimation, and behaviour modelling specific to political rally contexts, which differ from

sporting events or concerts.

Decision support systems: Developing AI-powered safety decision tools integrating hazard

databases, weather forecasting, and real-time monitoring to provide actionable

recommendations to Safety Command Cells.

Behavioural and Organisational Research

Safety culture assessment: Investigating factors influencing safety culture development in

political organisations, identifying barriers and enablers for cultural transformation.

Multi-agency coordination dynamics: Examining inter-organisational communication,

power dynamics, and coordination mechanisms in campaign safety management to optimise

collaboration structures.

Participant behaviour studies: Understanding crowd behaviour, risk perception, and safety

compliance among campaign participants to inform more effective communication and

intervention strategies.

Policy Analysis and Comparative Studies

Regulatory framework comparison: Analysing campaign safety regulations across countries

and states to identify effective policy mechanisms and implementation models.

Cost-benefit quantification: Rigorous economic analysis quantifying prevention costs versus

incident costs, insurance implications, and optimal resource allocation strategies.

Legal framework development: Jurisprudence research addressing liability questions,

regulatory authority, and legal mechanisms for enforcing campaign safety standards within

democratic rights frameworks.

Ethical and Democratic Theory Research

Safety-democracy balance: Philosophical examination of tensions between safety

requirements and democratic expression, developing principled frameworks for balancing

competing values.

Equity implications: Analysing how safety regulations affect different political parties,

especially resource-constrained organisations, and developing mechanisms to ensure that safety

does not become a barrier to democratic participation.

Rights framework integration: Legal and political theory research integrating safety

engineering into rights-based frameworks recognising both political participation rights and

rights to safe public spaces.

9. Conclusions

This study has developed and articulated the H.A.R.M.O.N.Y. Framework as a comprehensive

safety engineering architecture specifically designed for political campaign environments. By

extending established safety engineering principles from industrial contexts to democratic

spaces, the framework addresses a critical gap in public safety governance. Through this article,

we make several significant contributions to safety engineering theory and practice:

Conceptual innovation: Extending safety engineering’s boundaries into socio-political

systems, demonstrating that democratic processes constitute legitimate objects of systematic

safety analysis and intervention.

Methodological synthesis: Integrating diverse safety methodologies—FMEA, STPA, Root

Cause Analysis, and continuous improvement cycles—into a unified framework adapted to

temporary, high-density political environments.

Practical applicability: Providing actionable guidance through detailed component

descriptions, implementation mechanisms, and retrospective case application demonstrating

diagnostic utility.

Policy framework: Proposing concrete regulatory mechanisms including Safety Certification

Systems, Election Commission integration, and legal framework development that translate

conceptual models into institutional realities.

Cross-disciplinary bridge: Connecting safety engineering, political science, public

administration, and democratic theory in productive dialogue, advancing both safety practice

and democratic theory.

Further, H.A.R.M.O.N.Y.’s deeper significance lies in reconceptualising democratic

infrastructure itself. Political campaigns represent not merely logistical events but foundational

democratic rituals through which citizens exercise political agency. When these spaces become

sites of preventable injury and death, democracy itself suffers.

Safety engineering must evolve beyond factories and highways to safeguard the spaces where

citizens gather to shape collective futures. The H.A.R.M.O.N.Y. framework demonstrates that

this evolution is both necessary and achievable—that we can harmonise human enthusiasm

with engineered foresight, political expression with protective design.

Applying such frameworks to political campaigns can reduce preventable accidents, enhance

institutional trust in democratic processes, and redefine political participation as a domain of

responsible engineering ethics rather than improvised risk-taking. In an era where political

mobilisation occurs instantly and massively, designing safety into democracy is not optional—

it is an obligation to citizens, to democratic principles, and to the fundamental precept that

participation in governance should never cost lives.

This study concludes with urgent recommendations for multiple stakeholder groups:

Election authorities should integrate campaign safety into regulatory frameworks, leveraging

existing institutional mechanisms to establish certification systems and oversight structures.

Engineering professional bodies should develop specialised competencies in democratic

event safety, creating certification programs and ethical guidelines for engineers working in

political contexts.

Academic institutions should establish research programs investigating campaign safety,

training future safety professionals, and contributing technical expertise to practical

implementation.

Government agencies should allocate resources for safety infrastructure development,

recognising campaign safety as an essential public good worthy of public investment.

Civil society organisations should advocate for safety reforms, monitor implementation, and

ensure accountability for safety failures.

The Karur tragedy and similar incidents demonstrate that current approaches fail

democratic values and endanger citizens. The H.A.R.M.O.N.Y. framework provides a path

forward, one requiring commitment, resources, and political will, but promising transformative

improvements in how democracies manage the inherent risks of mass political participation.

References

- Argyris, C., & Schön, D. A. (1978). Organizational learning: A theory of action perspective. Reading, MA: Addison-Wesley.

- Challenger, R., Clegg, C., & Robinson, M. (2009). Understanding crowd behaviours: Supporting evidence. UK Cabinet Office Emergency Planning College.

- Dekker, S. (2011). Drift into failure: From hunting broken components to understanding complex systems. Farnham, UK: Ashgate.

- Deming, W. E. (2000). Out of the crisis. Cambridge, MA: MIT Press.

- FEMA. (2008). National Incident Management System. Washington, DC: Federal Emergency Management Agency.

- Helbing, D., Johansson, A., & Al-Abideen, H. Z. (2007). Dynamics of crowd disasters: An empirical study. Physical Review E, 75(4), 046109.

- Hollnagel, E. (2014). Safety-I and Safety-II: The past and future of safety management. Farnham, UK: Ashgate.

- Hollnagel, E., Woods, D. D., & Leveson, N. (2006). Resilience engineering: Concepts and precepts. Aldershot, UK: Ashgate.

- Hopkins, A. (2014). Issues in safety science. Safety Science, 67, 6-14.

- ISO. (2018). ISO 31000:2018 Risk management — Guidelines. Geneva: International Organization for Standardization.

- Kumar, R., & Singh, A. (2018). Political mobilization and public safety in Indian elections. Journal of South Asian Development, 13(2), 178-201.

- Leveson, N. (2012). Engineering a safer world: Systems thinking applied to safety. Cambridge, MA: MIT Press.

- Memish, Z. A., Zumla, A., Alhakeem, R. F., Assiri, A., Turkestani, A., Al Harby, K. D., … & Al-Tawfiq, J. A. (2014). Hajj: Infectious disease surveillance and control. The Lancet, 383(9934), 2073-2082.

- NDMA. (2016). Guidelines on crowd management. New Delhi: National Disaster Management Authority, Government of India.

- NIOSH. (2015). Hierarchy of controls. Cincinnati, OH: National Institute for Occupational Safety and Health.

- Rao, M. S., & Mehta, P. (2019). Safety gaps in mass political gatherings: An Indian perspective. International Journal of Disaster Risk Reduction, 36, 101089.

- Reason, J. (1997). Managing the risks of organizational accidents. Aldershot, UK: Ashgate.

- Renn, O. (2008). Risk governance: Coping with uncertainty in a complex world. London: Earthscan.

- Rooney, J. J., & Heuvel, L. N. V. (2004). Root cause analysis for beginners. Quality Progress, 37(7), 45-56.

- Sharma, V. (2020). Political campaign accidents in India: A systematic review. Asian Journal of Public Administration, 42(3), 234-251.

- Stamatis, D. H. (2003). Failure mode and effect analysis: FMEA from theory to execution (2nd ed.). Milwaukee, WI: ASQ Quality Press.

- Still, G. K. (2014). Introduction to crowd science. Boca Raton, FL: CRC Press.

- Vaughan, D. (1996). The Challenger launch decision: Risky technology, culture, and deviance at NASA. Chicago: University of Chicago Press.

- Weick, K. E., & Sutcliffe, K. M. (2015). Managing the unexpected: Sustained performance in a complex world (3rd ed.). Hoboken, NJ: Wiley.

- WHO. (2021). Mass gathering health risk assessment tools. Geneva: World Health Organization.